When Vision-Language Models Move to the Edge, AI Finally Becomes Operational

Most AI conversations still revolve around accuracy, parameters, or model size.

But for industrial and infrastructure teams, the real question is simpler:

Can AI make a decision fast enough, on site, without relying on the cloud?

This is where Vision-Language Models (VLMs) change the value of Edge AI: not by adding more intelligence, but by making intelligence usable in real operations.

Vision Alone Is No Longer Enough

Computer vision has been widely adopted across manufacturing, logistics, and smart infrastructure. Cameras detect defects, identify objects, and trigger alarms. Yet most systems still suffer from a familiar limitation:

They see, but they don’t understand context.

A traditional vision system might detect an abnormal pattern, but it cannot:

- Explain why the anomaly matters

- Connect what it sees to operating procedures

- Respond to human questions in natural language

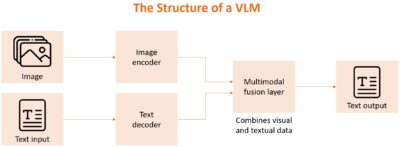

VLMs close this gap by linking visual perception with language-based reasoning. Instead of outputting only labels or scores, they produce natural-language descriptions and answers, bridging machine perception and human intent.

Why the Cloud Is the Wrong Place for VLMs in Industrial Environments

VLMs are often associated with large, cloud-based AI models. But in real-world industrial scenarios, the cloud introduces friction rather than value.

Common constraints include:

- Latency that delays safety-critical decisions

- Bandwidth costs from continuous video streaming

- Data sovereignty and privacy concerns

- Operational risk when connectivity is unstable

For applications such as visual inspection, situational awareness, or on-site diagnostics, a round trip to the cloud is often too slow, or too dependent on connectivity, to rely on.

This is why VLMs become far more impactful when deployed at the edge.
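To put the bandwidth constraint listed above in rough numbers, here is a back-of-the-envelope sketch in Python. The camera count and per-stream bitrate are illustrative assumptions, not figures from any specific deployment, and the function name is hypothetical.

```python
def stream_gb_per_day(bitrate_mbps: float, cameras: int) -> float:
    """Data volume, in decimal gigabytes per day, for continuously streamed cameras."""
    seconds_per_day = 24 * 60 * 60
    megabits = bitrate_mbps * cameras * seconds_per_day
    # megabits -> megabytes (divide by 8) -> gigabytes (divide by 1000)
    return megabits / 8 / 1000


# Assumed site: 16 cameras, each streaming at 4 Mbps around the clock.
daily_gb = stream_gb_per_day(bitrate_mbps=4.0, cameras=16)
print(f"{daily_gb:.1f} GB uploaded per day")
```

Under these assumed values the site would upload roughly 690 GB per day if every frame went to the cloud. Processing video locally and sending only events or summaries removes most of that traffic.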

Edge AI Turns VLMs into Actionable Intelligence

Running VLMs on Edge AI platforms shifts AI from analysis to execution.

At the edge, VLMs can:

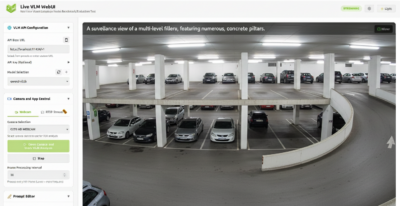

- Interpret visual scenes locally and in real time

- Respond to natural language queries from operators

- Correlate visual input with operational rules or manuals

- Trigger immediate actions without cloud dependency

Instead of asking, “What does the camera detect?” teams can ask:

- “Is this machine operating within safe limits?”

- “What changed compared to normal operation?”

- “Show me where the risk is and why.”

This transforms AI from a monitoring tool into a decision-support system.
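The flow described above (interpret a frame locally, ask a natural-language question, trigger an action) can be sketched roughly as follows. This is a minimal illustration, not a real product API: `Rule`, `evaluate_frame`, and `fake_vlm` are hypothetical names, and the model is a stub standing in for whatever on-device VLM runtime is actually used.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Rule:
    question: str                   # natural-language query sent to the model
    unsafe_prefix: str              # answer prefix that should trigger the action
    action: Callable[[str], None]   # local action, e.g. raise an alarm


def evaluate_frame(
    frame: bytes,
    rules: List[Rule],
    ask_vlm: Callable[[bytes, str], str],
) -> List[str]:
    """Ask each rule's question about one frame and run actions locally."""
    triggered = []
    for rule in rules:
        answer = ask_vlm(frame, rule.question)
        if answer.strip().lower().startswith(rule.unsafe_prefix):
            rule.action(answer)     # no cloud round trip involved
            triggered.append(rule.question)
    return triggered


def fake_vlm(frame: bytes, question: str) -> str:
    """Stub model (assumption): returns canned answers for illustration only."""
    if "safe limits" in question:
        return "No, the guard door is open."
    return "Yes, nothing unusual."


alerts: List[str] = []
rules = [Rule("Is this machine operating within safe limits?", "no", alerts.append)]
triggered = evaluate_frame(b"", rules, fake_vlm)
print(triggered, alerts)
```

Matching on an answer prefix is a deliberate simplification. A real deployment would likely constrain the model's output format or parse a structured response before acting on it.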

Operational Scenarios Where VLMs at the Edge Matter

The value of edge-based VLMs becomes clear in real deployments:

Manufacturing & Automation

- Visual inspection systems that explain defects, not just flag them

- Faster root-cause analysis with contextual reasoning

- Reduced reliance on highly specialized AI engineers on site

Smart Infrastructure & Cities

- Local interpretation of complex scenes in traffic or public spaces

- Privacy-preserving video analytics without raw data leaving the site

- Faster response to abnormal or unsafe situations

Logistics & Warehousing

- Visual understanding combined with task-level instructions

- More flexible human–machine collaboration

- Adaptation to changing layouts and workflows

In all cases, intelligence is most valuable where the action happens.

Making VLMs Practical at the Edge

Deploying VLMs outside the cloud requires more than just a powerful model. It demands a platform designed for real-world constraints.

Key requirements include:

- Optimized AI performance within limited power and thermal budgets

- Support for AI accelerators and heterogeneous computing

- Secure boot and hardware-based trust for AI workloads

- Industrial-grade reliability for 24/7 operation

These are the requirements that purpose-built Edge AI platforms are designed around, and where they tend to outperform generic compute hardware.
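One constraint closely tied to these budgets is memory: a model has to fit on the device before any of the other requirements matter. The sketch below estimates the memory needed for model weights at different precisions. The parameter count, device memory, and reserve fraction are assumptions chosen for illustration, and the estimate ignores activations, caches, and the vision encoder's own overhead.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in decimal gigabytes."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def fits_on_device(
    params_billion: float,
    bits_per_weight: int,
    device_memory_gb: float,
    reserve_fraction: float = 0.3,  # assumed share kept for OS, video buffers, etc.
) -> bool:
    """Check whether the weights fit in the memory left after the reserve."""
    usable_gb = device_memory_gb * (1 - reserve_fraction)
    return weight_memory_gb(params_billion, bits_per_weight) <= usable_gb


# Assumed example: a 7-billion-parameter model on a device with 8 GB of memory.
for bits in (16, 8, 4):
    size = weight_memory_gb(7, bits)
    print(f"{bits}-bit weights: {size:.1f} GB, fits: {fits_on_device(7, bits, 8)}")
```

With these assumed numbers, only the 4-bit variant fits, which is why quantization and accelerator support usually come up together when sizing an edge platform.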

From AI Capability to AI Deployment

The industry is moving beyond asking what AI can do and toward asking where it should run. VLMs represent a major step forward in AI capability. Edge AI determines whether that capability can be deployed safely, reliably, and at scale. Together, they form the foundation of intelligent systems that are not only powerful, but operational.

At Emplus, the Edge AI Box is designed to bridge this gap by bringing vision-language intelligence directly to the field. By enabling optimized AI inference, real-time visual analytics, and multimodal understanding at the edge, it allows organizations to turn AI innovation into deployable solutions. In industrial environments, intelligence is only valuable when it works on site, in real time, and without compromise.